Automatic Panorama Stiching

Written on November 8th, 2018 by Andrew Campbell

This post explores automatic panorama construction in Python using OpenCV. Specifically, we explore how to use feature descriptors and keypoint matching to recover homographies between images, then warp and blend them into a mosaic.

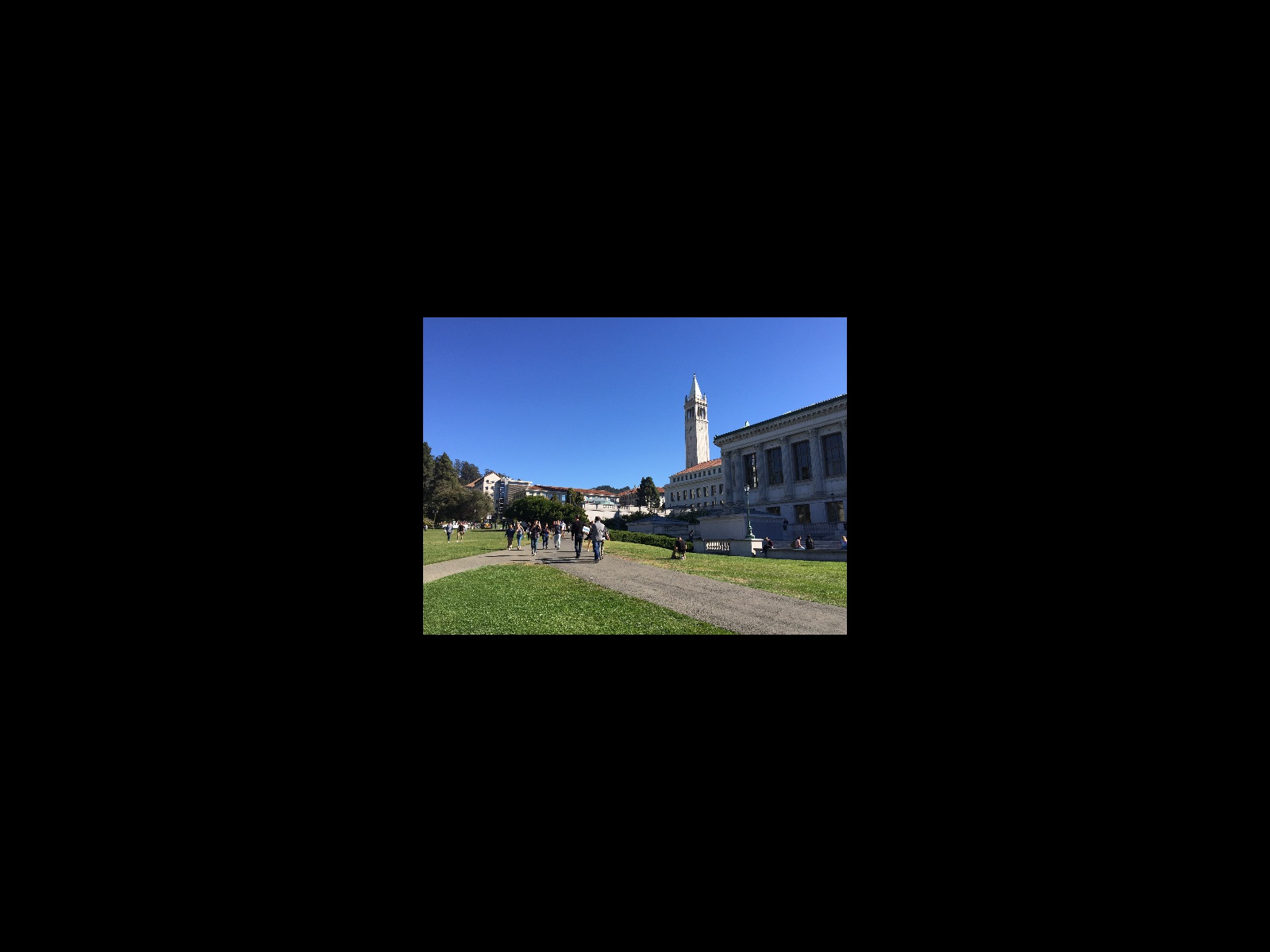

Note: This was originally done as part of a project for CS194-26, a course on computational photography at UC Berkeley.

Introduction

A panorama is a wide-angle view of a space. The most common method for producing panoramic images is to take a series of pictures with slightly overlapping fields of view and stitch them together. There are three main projection modes used in stiching images: spherical, cylindrical, and perspective. Spherical projection tries to align and transform the photos as if they were all plastered onto the inside of a sphere; it works well for 360 panoramas. Cylindrical projection projects the panorama as if it were placed on the inside of a cylinder; it works well for very wide panoramas and is used by most smartphone apps. Perspective projection projects the panorama as though it were mapped on a flat surface. This post will explore perspective projection, which is the easiest to implement.

Specifically, we will take as input three photos with the same point of view but with rotated viewing directions and with overlapping fields of view. Then we will use point correspondences to recover homographies from the left and center images and center and right images. We then perform a projective warp on the left and right images. Finally, we blend them together to create a panorama.

Recovering Homographies

Any two images of the same planar surface in space are related by a homography. The 3 x 3 homography matrix has the following form:

It has eight degrees of freedom (as the last entry is fixed to be 1) and thus in principle only requires four point correspondence pairs to be recovered. However, this approach is prone to noise and in practice we find least square regression on many pairs of points works better. Given pairs of points for the first image and second image , we wish to find such that

is minimized. It can be shown through some algebra that the relevant matrix equation is

We can solve for using least squares to recover .

To obtain point correspondences, we manually record coordinates of the same features in both images. This is a tedious task; we will automate the process later in the post. I found that using 20 point correspondences was sufficient.

Image Rectification

To demonstrate how projective transforms work, we will first look into image rectification: given an image containing a planar surface, warp it so that it is frontal-parallel. We simply select 4 points representing the corners of the plane in the original image and choose the correponding points in the output to be the corners of a rectangle.

The warping procedure is simple: for every coordinate value in the output result, multiply by the (inverse) homography matrix, taking care to normalize to be , to recover the “lookup” coordinates in the original image. We perform interpolation to avoid aliasing.

Some examples are shown below.

Mosaics

Given three images with the same point of view but with rotated viewing angles and overlapping fields of view, we can create a mosaic. We can recover the homography transformation as detailed above, and warp the left and right images appropriately. Note that in order to capture all of the warped result, we first create a large blank canvas and place the image in the center.

In order to blend the resulting warps, we can simply use alpha blending by multiplying the image by a feathered mask. Some example masks for the left, center, and right images, respectively, are shown below.

Results

Automatic Stitching

Manually picking point correspondences is a tedious task. We will use a (simplified) implementation of Multi-Image Matching using Multi-Scale Oriented Patches to automate this process.

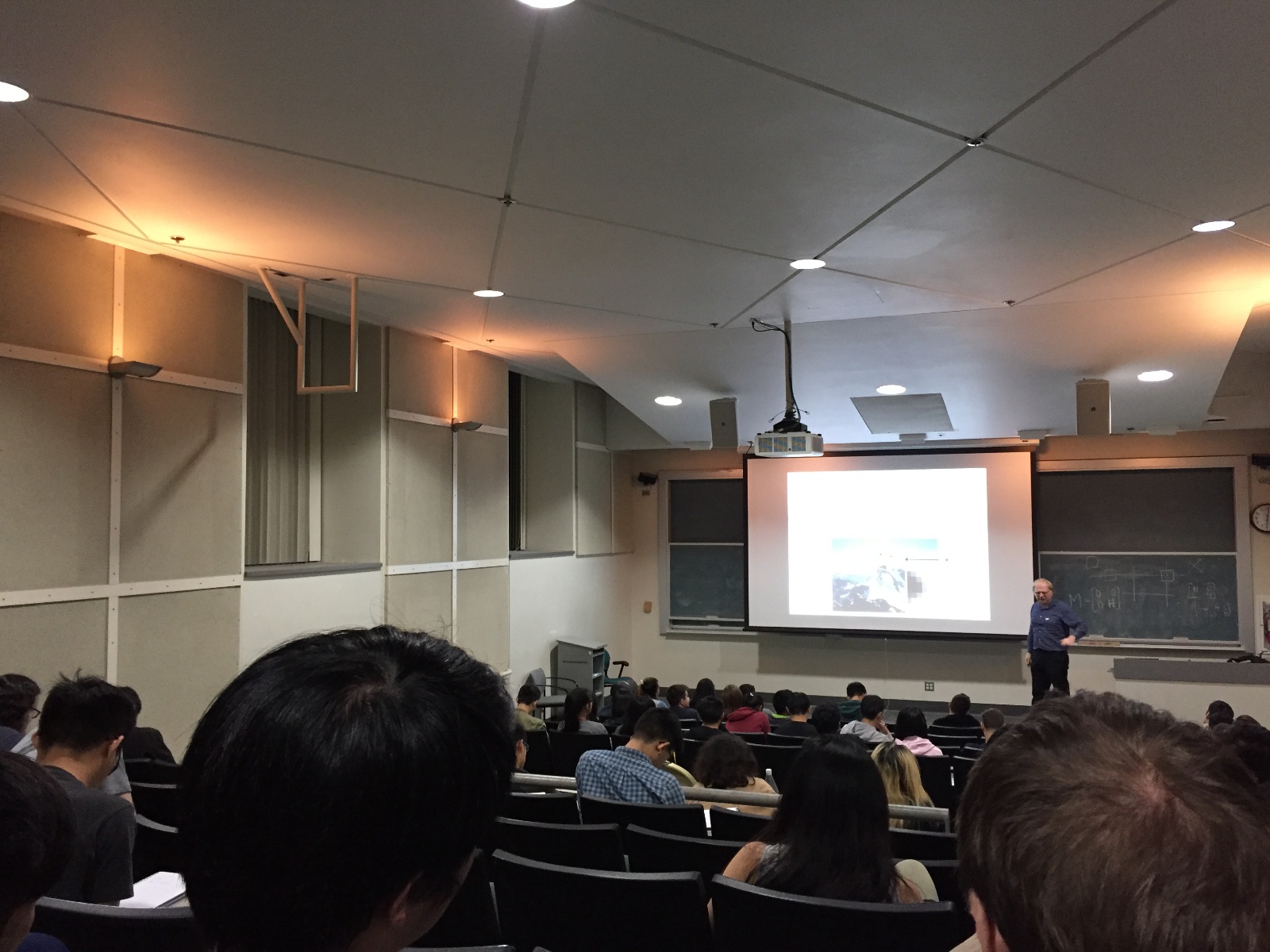

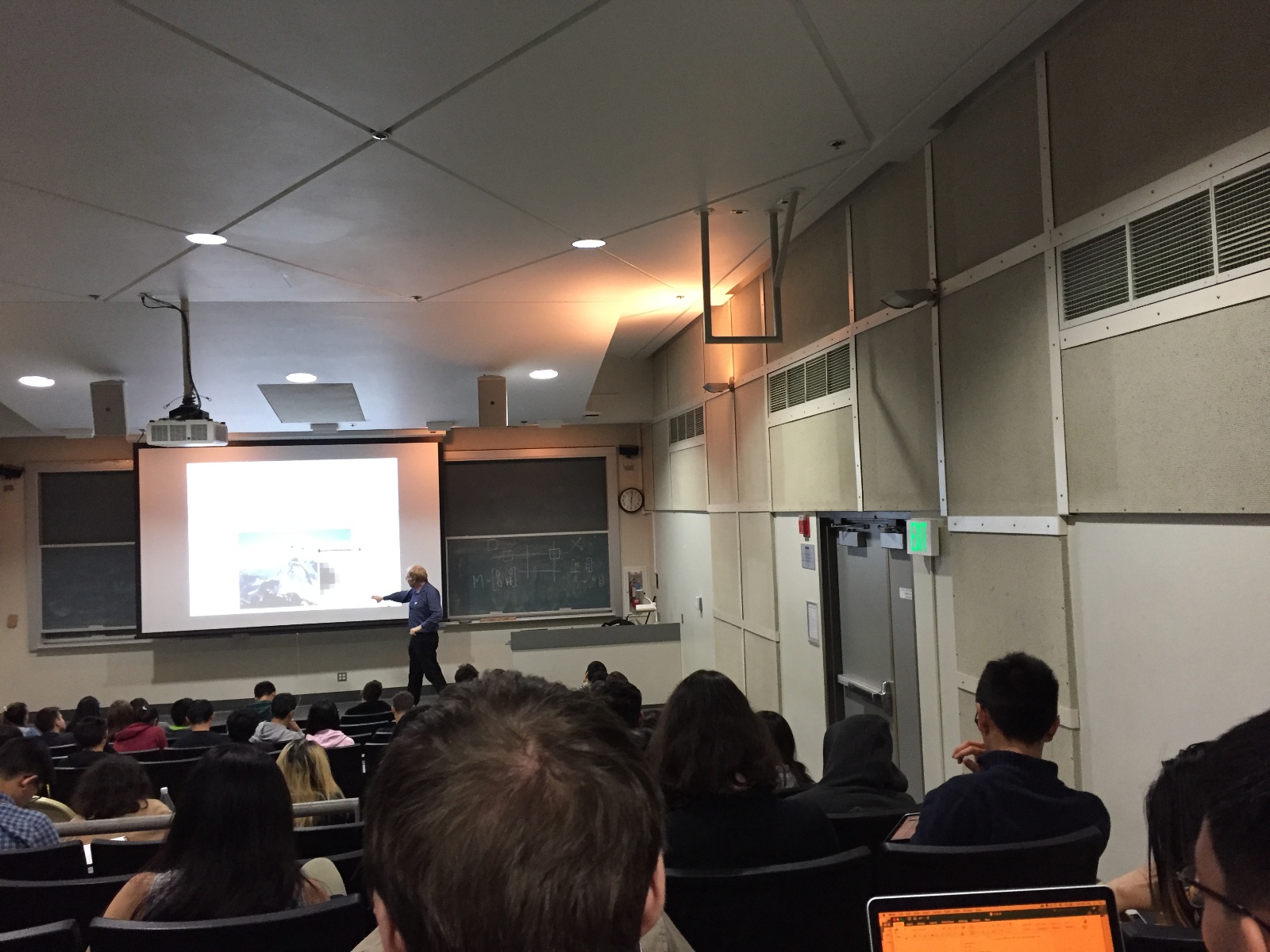

Harris Corners

The first step in finding matches between images is to generate potential points of interest in the images. The Harris Corner Detector is an algorithm appropriate for this task; it finds corners in an image, or points whose local neighborhoods stand in two different edge directions. I used the corner_harris and corner_peaks functions from the skimage.feature library to do the work here. corner_harris returns a response matrix representing the corner strength at every pixel coordinate in the image, which will be useful later.

Shown below are two images with their Harris corners overlaid in yellow.

Adaptive Non-Maximal Suppression

As we can see, there are a lot of Harris corners found. The computational cost of matching is superlinear in the number of interest points, so it is desirable to limit the maximum number of interest points extracted from each image. At the same time, it is important that interest points are spatially well distributed over the image. The algorithm presented in the paper, Adaptive Non-Maximal Suppression or ANMS, solves this problem by selecting the points with the largest values of , where is the minimum suppression radius of point :

Here, is the set of all interest points and represents the corner response of point (which we get from response matrix we retained from the previous step).

In simpler terms, for each point, we find the minimum radius to the next point such that the corner response for the existing point is less than some constant multiplied by the response for the other point. Using and keeping only the top 500 points, the corners left are shown below.

Feature Descriptor Extraction

We can now use our interest points as feature descriptors. We do this by extracting a 40x40 patch centered about each point and downsampling the patch to 8x8 - this is done to eliminate high frequency signals. We additionally bias/gain normalize the patches by subtracting the mean and dividing by the standard deviation. This makes the features invariant to affine changes in intensity (bias and gain).

Feature Matching

The next step is to find geometrically consistent feature matches between two images. First we vectorize the feature descriptors extracted in the last step. Then we compute the squared distance from every feature in the first image to every feature in the second image (essentially a nearest-neighbor search). We use the Lowe ratio test: if the ratio of the distance to the 1-NN over the distance to the 2-NN is less than some threshold, we consider the pair to be a match. The rationale is that true feature matches have a single good match, not multiple. I found that a threhold value of worked well. The matches are shown below.

RANSAC

The feature matches computed above look quite good, but there is a noticeable outlier in the left image. There is a feature point on the the pedestrian’s arm in the left image with no corresponding match in the right image. The least-squares homography calculation is highly sensitive to outliers, so we must reject them first. To that end, we use RANSAC, or random sample concensus. We choose 4 potential matches at random from the first image and compute an exact homography . We then warp all of match points from the first image according to and compute the SSD error between them and the real match points in the second image. Define inliners to be the set of points whose error is less than some threshold. Repeating this process several times, we keep track of the largest set of inliners and retain them as our true feature matches.

I used an error threshold of and 100 iterations. The matches left after RANSAC are shown below.

As we can see, the outlier(s) have been discarded.

Having successfully recovered point correspondences automatically, we can now proceed as in Part 1 to stich the images together.

Results

Below we compare the (cropped) mosaics resulting from automatic feature matching (left) and manual feature feature mapping (right) from before.

Because this project may be reused in the future, I cannot make the code public. I will make it available if I obtain permission in the future.

© Andrew Campbell. All Rights Reserved.